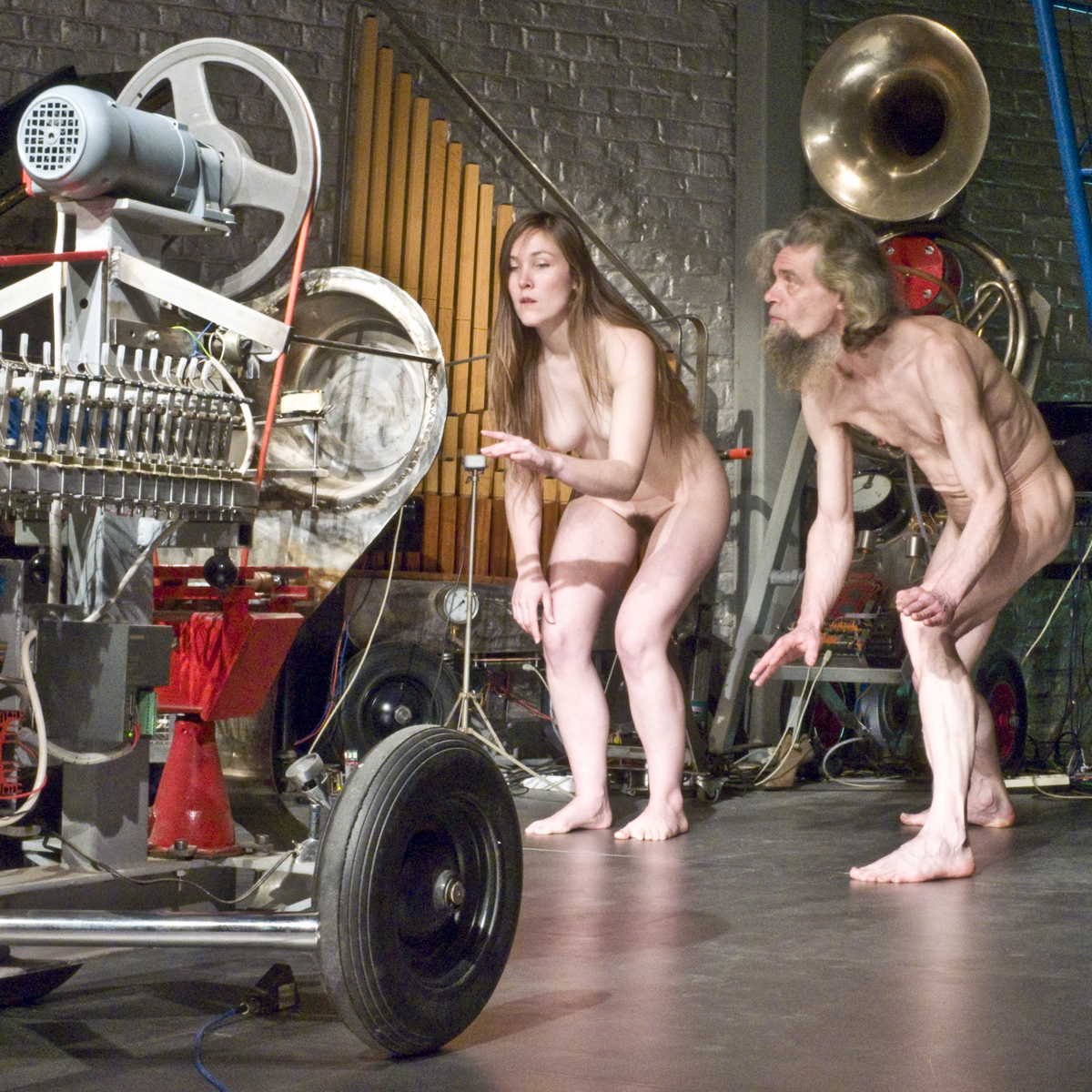

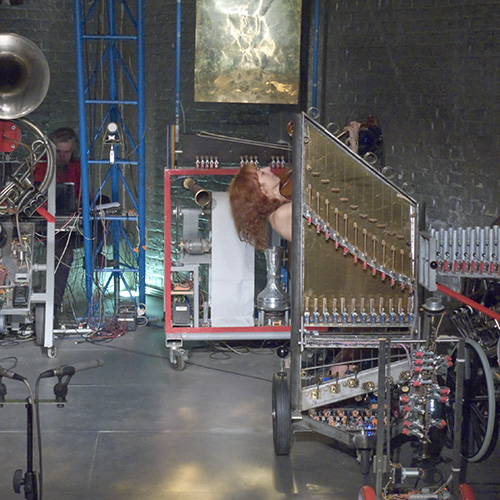

This suite of compositions was started in 2010, and the number of sections and components keeps increasing as we build more robots for the M&M orchestra and add more software-based modules for gesture recognition. All studies are designed to be completely interactive and receive their inputs from one or more dancers. The compositions make use of the author's invisible instrument, based on sonar and microwave Doppler shifts caused by the moving and reflective body. For this reason, the performer always has to be naked. The hardware is fully described in our article on the ii2010 invisible instrument (Holosound), and details of our gesture recognition system are given in another extensive article on Namuda gesture analysis.

The automats that can be played are selected from the complete catalogue of musical robots realised so far.

These robots constitute almost the entire robot orchestra built by the author up to the end of the year 2011. The setup on stage takes at least 120 square meters. The naked dancer(s) should be placed centrally, surrounded by the automats.

Namuda forms in fact a collection of pieces that can be performed alone or in a sequence, as a suite. The 'scores' are completely embedded into the software. The choreography is laid down in notes and descriptive plots.

Namuda Study #1: "Links" (april 2010) - duration 10'

Gestural particles recognized in this choreographed study: Implosion/Explosion, Freeze, Speedup/Slowdown, Fluency, Constancy of speed, Collision, Theatrical Collision. The recognition of these elementary shapes forms the basis of the composition. Timing resolution is better than 10 ms. In one section of the piece a spectral transform is applied to the gesture data stream, and its result reflects the gesture shape very well. The results of the transform are mapped onto the overtone series of our <Hurdy> robot, with each of the two movement vectors on a different string. The hardware platform used is ii2010, using omnidirectional MEMS sensors. Details are published by the author in a separate article. Hardware as well as software were developed completely by the author, using the PowerBasic programming language on the Intel/Windows platform.
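The idea of mapping a spectral transform of gesture data onto an overtone series can be illustrated with a minimal sketch. This is not the author's PowerBasic implementation: the function names, the window length, the plain DFT and the string fundamental of 65.4 Hz are all assumptions chosen for illustration. Bin k of the transform is simply assigned to the k-th partial of one string, and the function would be called once per movement vector.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Return the magnitude of DFT bins 1..N/2 (the DC bin is skipped)."""
    n = len(samples)
    mags = []
    for k in range(1, n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(samples))
        mags.append(abs(acc) / n)
    return mags


def overtone_levels(samples, fundamental_hz, n_overtones=8):
    """Map DFT bin k onto the k-th partial of a string (hypothetical mapping).

    Returns a list of (frequency_hz, level) pairs, levels normalised to 0..1.
    """
    mags = dft_magnitudes(samples)[:n_overtones]
    peak = max(mags) or 1.0  # avoid division by zero for a motionless window
    return [(fundamental_hz * (k + 1), m / peak) for k, m in enumerate(mags)]


# Hypothetical gesture window: 64 samples of a movement repeating 3 times.
window = [math.sin(2 * math.pi * 3 * i / 64) for i in range(64)]
levels = overtone_levels(window, fundamental_hz=65.4)
strongest = max(levels, key=lambda pair: pair[1])
```

In this example the movement repeats three times per window, so the third partial of the assumed string carries the highest level.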

"Links" was originally written to be performed as a duet by the author and Dominica Eyckmans, but because volcanic ash made air travel impossible (she was stranded somewhere on Rhodos), the piece was premiered with a. rawlings on april 20th of 2010.

Herewith some snapshots taken during the performance:

Namuda Study #2: "Polyv.i.'s" (may 2010) - duration 7'

In this study only a very small subset of the recognisable gestural particles is used: namely, the dipole Speedup/Slowdown and, in the introduction only, the fluency gesture. The hardware platform used is ii2010, using omnidirectional MEMS sensors. Details are published by the author in a separate article. Hardware as well as software were developed completely by the author, using the PowerBasic programming language on the Intel/Windows platform.

"Polyv.i.'s" was originally written to be performed by a dancing viola player, in this case Dominica Eyckmans. The piece was premiered by her on may 20th of 2010.

Namuda Study #3: "Collis" (may 2010) - duration 8'

This study uses mostly a single gesture, collision, and fully exploits the recognition conditions for this gestural property. As an extension, it also introduces the recognition of jumps. It was written to be performed by Lazara Rosell Albear, a Cuban dancer and percussionist. The instrumentation makes use of all the percussive robots in the M&M orchestra, augmented with the lower brass instruments for the jumps. The hardware platform used is again ii2010, using omnidirectional MEMS sensors. Details are published by the author in a separate article. Hardware as well as software were developed completely by the author, using the PowerBasic programming language on the Intel/Windows platform.

"Collis" was premiered on may 20th of 2010 by L.R. Albear.

A photoshoot of the 'Robozara' production by Bart Gabriel (july 22nd, 2010) can be found here.

Namuda Study #4: "Robodomi" (july 2010) - duration 15'

This study, written for Dominica Eyckmans, uses the combined recognition of the gestural prototypes edgy, smooth, fluent, speedup and slowdown.

A small photoshoot by Bart Gabriel can be found here.

Namuda Study #5: "RoboGo" (july 2010) - duration 14'

This study, written to be performed by the author, uses the combined recognition of concatenations of the gestural prototypes.

More pictures can be found in the small photoshoot by Bart Gabriel.

Namuda Study #6: "RoboEmi" (july 2010) - duration 15'

This study, written by Kristof Lauwers to be performed by Emilie De Vlam, uses the namuda gesture recognition engine. Photoshoot by Bart Gabriel.

Namuda Study #7: "RoboBomi" (september 2010) - duration 10'

This study uses the namuda gesture recognition engine to create a serial composition in real time, scored for a very limited subset of our robot orchestra: <Bomi> as the main voice, with accompaniment voices scored for <Toypi> and <Aeio>, all controlled by a viola-playing dancer. The piece was written for Dominica Eyckmans and premiered on september 16th at the Logos Foundation. Here are some pictures of this performance:

Namuda Study #8: "Features" (october 2010) - duration 6'

This study uses the namuda gesture recognition engine to create a composition in real time, scored for a very limited subset of our robot orchestra. In particular, the newly added sound sources in robots such as <Thunderwood>, <Simba>, <Bomi> and the <Piano-Pedal> come into view. The piece was written for Dominica Eyckmans and was premiered on october 20th at the Logos Foundation. The main gesture properties used here are edginess and speedup. Compositional structures are based on the numbers 10 and 20.

Namuda Study #9: "Zwiep & Zwaai" (november 2010) - duration 6'30"

This study uses the namuda gesture recognition engine to create a composition in real time, scored for the subset of our robot orchestra characterized by the capability to move visibly. Hence the moving robots <Korn> and <Ob> get the most important role, but <Puff>'s eyes, <Springers>' shakers, <Simba>'s arms and <Thunderwood>'s wind machine also come into play. The piece was written for Dominica Eyckmans and was premiered on november 17th at the Logos Foundation. The main gesture properties used here are expansion, freezing and collision. Compositional structures are merely based on scales.

Namuda Study #10: "Icy Vibes" (december 2010) - duration 7'00"

This study exploits a few new features added to our <Vibi> robot: precise modulation of the resonator wheels as well as its LED stroboscopic lights. It uses the namuda gesture recognition engine to steer the compositional structure of the interaction between <Vibi> and <Bomi>, <Piperola> and <Harma>. The study is scored for a dancing viola player, Dominica Eyckmans, and was premiered on december 16th at the Logos Foundation. The main gesture properties used here are collision, theatrical collision, edginess and smoothness.

Namuda Study #11: "Prime 2011" (january 2011) - duration minimum 6'40", maximum 33'00"

This rather extensive study is based on the numerical analysis of the prime number 2011: it is the sum of a series of 11 successive prime numbers: 157, 163, 167, 173, 179, 181, 191, 193, 197, 199 and 211. Moreover, it is at the same time the sum of three successive prime numbers: 661, 673 and 677. The global architecture of the piece is based on these prime numbers. The details are made to be interactive and make use of the Namuda gesture properties. The harmonic structure is entirely based on spectral distributions of slowly expanding irrational overtone series. The study fully exploits the quartertone and microtonal possibilities of the robot orchestra. The mapping of gestural properties onto musical robots is as follows:

- smooth gestures @ Qt

- fluent gestures @ Ob

- constant speed gestures @ Korn

- edgy gestures @ Xy

- accelerating gestures @ Bono

- slowdown gestures @ Bomi

- shrinking gestures @ Puff

- exploding gestures @ piano

- collision @ Vacca, Vitello, Belly

- theatrical collision @ Toypi

- gestural freeze @ Bourdonola
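The mapping listed above lends itself to a simple lookup table. The sketch below only restates the list as a data structure; the key names and the `robots_for` helper are hypothetical and are not taken from the author's software.

```python
# Gesture property -> robots that respond to it, as listed for "Prime 2011".
GESTURE_TO_ROBOTS = {
    "smooth": ["Qt"],
    "fluent": ["Ob"],
    "constant speed": ["Korn"],
    "edgy": ["Xy"],
    "accelerating": ["Bono"],
    "slowdown": ["Bomi"],
    "shrinking": ["Puff"],
    "exploding": ["piano"],
    "collision": ["Vacca", "Vitello", "Belly"],
    "theatrical collision": ["Toypi"],
    "freeze": ["Bourdonola"],
}


def robots_for(gesture):
    """Return the robots mapped to a recognised gesture (empty list if none)."""
    return GESTURE_TO_ROBOTS.get(gesture, [])
```

For instance, `robots_for("collision")` yields the three robots named in the list, while an unrecognised gesture yields an empty list.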

Prime number derived markers, independent from gestures, are confined to <Trump>, <Krum>, <Vox Humanola>, <Snar>, <Llor>, <Springers>, <Vibi> and <Tubi>. The piece was premiered by Dominica Eyckmans on january 19th 2011. The piece can be performed by one or two dancers.
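The arithmetic behind the title of this study can be checked with a few lines of code. The following sketch (function names are illustrative only) searches for runs of successive primes that add up to 2011.

```python
def is_prime(n):
    """Trial-division primality test, sufficient for small numbers."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def consecutive_prime_runs(target, length):
    """Return every run of `length` successive primes whose sum is `target`."""
    primes = [p for p in range(2, target + 1) if is_prime(p)]
    return [primes[i:i + length]
            for i in range(len(primes) - length + 1)
            if sum(primes[i:i + length]) == target]


run_of_11 = consecutive_prime_runs(2011, 11)
run_of_3 = consecutive_prime_runs(2011, 3)
print(is_prime(2011))  # True
print(run_of_11)       # [[157, 163, 167, 173, 179, 181, 191, 193, 197, 199, 211]]
print(run_of_3)        # [[661, 673, 677]]
```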

Namuda Study #12: "Poly-a" (february 2011) - duration minimum 6'40" , maximum 10'00"

This study is about polyphony and involves a performer playing the viola, singing and dancing all at the same time. On top of these 3 voices of polyphony, another set of 3 voices is generated interactively and mapped onto instruments of the robot orchestra: <Aeio>, <Vibi>, <Bomi>, <Bourdonola>, <Toypi>, <Puff>, <Qt>, <Xy>. The display on <So> is used to communicate with and cue the performer. The counterpoint makes use of rules derived from slowly shifting irrational spectral harmony. The thematic material is derived from a simple note series:

The composition was premiered on the M&M concert of february 10th, at the Logos Foundation Tetrahedron Hall. The dancer/vocalist/viola player was Dominica Eyckmans.

Namuda Study #13: "AI" (march 2011) - duration minimum 5'00" , maximum 10'00"

This study is about artificial intelligence, and thus its software is an implementation thereof. To a great extent it processes information about the past of the piece, and thus becomes capable of analysing its own musical context in real time. The study not only uses our gesture interfaces, but also takes into account the acoustic input from the performer on the viola as well as the sounds produced by the orchestra itself. The performer can play an instrument, sing and dance all at the same time.

The composition was premiered on the M&M concert of march 15th, at the Logos Foundation Tetrahedron Hall. The dancer/vocalist/viola player was Dominica Eyckmans.

Namuda Study #14: "Miked" (april 2011) - duration minimum 3'00" , maximum 6'00"

This study is for a singing/rapping dancer with a handheld microphone. It is meant as a parody of the typical 'seductive' gestures of pop-music singers. The singer's vocal utterances are spectrally mapped onto Qt, the quartertone organ, such that we can clearly hear Qt speak. The singer's gestures are likewise submitted to fast Fourier transforms and, in their three vectors, spectrally mapped onto the robots <Harma>, <Xy>, <Puff>, <Piperola> and <Bourdonola>. use other robots in the orchestra.

The composition was premiered on the M&M concert of april 4th, at the Logos Foundation Tetrahedron Hall. The dancer/vocalist was Dominica Eyckmans.

Namuda Study #15: "Early Birds" (may 2011) - duration minimum 3'00" , maximum 5'00"

This study was written on the occasion of the preliminary introduction of our <Fa> robot, an automated bassoon, in the robot orchestra. Two main gesture properties, speedup and slowdown (a dipole), are mapped onto the output of a single monophonic instrument. All controls available on the bassoon robot are driven by parameters derived from the gesture analysis.

The composition was premiered on the M&M concert of may 19th, at the Logos Foundation Tetrahedron Hall. The dancer/viola player was Dominica Eyckmans.

Namuda Study #16: "Lonely Tango" (june 2011) - duration 6'30"

This study was written on the occasion of the tango concert and dance production in june 2011. It makes use of tango music, interactively combined with the gesture sensing technology. It is a fully choreographed piece to be performed by a tango couple, one of whom also plays the viola. The piece was premiered by the author and Dominica Eyckmans.

Namuda Study #17: "SpiroBody" (september 2011) - duration 6'00"

This study was written on the occasion of the introduction of our <Spiro> robot, an automated spinet (small harpsichord), in the robot orchestra. All controls available on the <Spiro> robot are driven by parameters derived from the gesture analysis.

The 'Namuda' project on gesture control as well as the development of the robot orchestra are postdoctoral research projects with the support of the Ghent University Association, School of Arts.